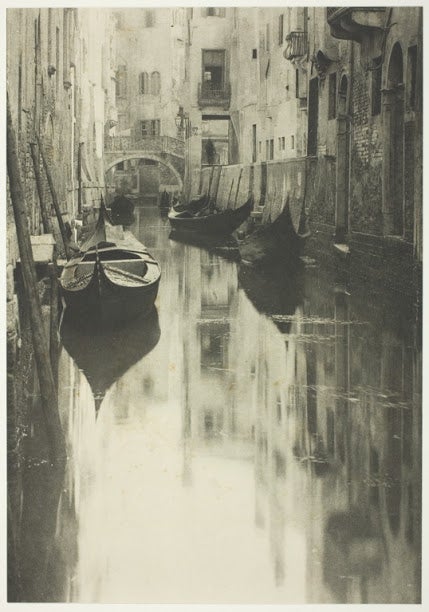

I’m not sure why anyone would, except by accident, but if one Googles “when did photography become an art”, the automated answer that is spat out is “1940s”. If we find something odd about this answer, we should interrogate not the answer itself but the method by which the automated question-answering faculty of Google’s search function arrives at it. Obviously the answer is counter-intuitive and basically wrong by any reasonable definition we might use, and if I were to ask a human being this question, and they answered in this way, questioning their reasoning or methodology would be an entirely natural response. What might we expect this person to answer, though? Anyone who confidently asserts that photography became an art in the 1940s probably has a worldview worth surveying. They might say:

This quotation comes from an article to be found on photoportrayal.com which details a brief episode in the history of photography concerning the gallery exhibition of some works by Alfred Stieglitz and Ansel Adams. It’s not an especially well-written or insightful piece (it includes the genuinely unsubstantiated sentences “Photographers long before Alfred Stieglitz considered themselves to be artists, but the rest of the world didn't share these views” and “His work has supported the early environmental movement in the US and was just aesthetically perfect”) and, contrary to its title, it doesn’t really seem interested in the question of how photography became art. Its principal interest isn’t even really when; it is most concerned with who. The thing is a vehicle for an exposition of Stieglitz and Adams.

The Google search situation, in which answers to poorly formulated questions are given according to inscrutable data processing criteria, illustrates how philosophy becomes important to the methodology of asking questions. This is the realm of epistemology. Philosophy of X implies a sort of survey of generalities regarding X, usually in the form of various critiques of methodology. Philosophy of science is rarely concerned with concrete pieces of empirical observation, except as examples to illustrate generalities. Philosophy of art and of history ought to be the same way. A philosophy of computing without a robust and general critique of the epistemology of these queries produces nonsense. If you’re not convinced that this matters, because it’s just a badly formulated question about something with no material bearing on anything, then it’s probably important to say that we are not far away from letting these systems educate our kids, or inform our doctors. That said, we shouldn’t need to consider doomsday scenarios for questions about epistemology, methodology and generality to matter to us.

The question “when did photography become an art” is badly formulated because “art” is badly defined, pretty much always. One of the ways of rephrasing that question is “when was photography treated as being similar to painting by art galleries?” This is a laughably narrower question, of course. It’s the basis of the answer given in the photoportrayal.com article.

“Photographers long before Alfred Stieglitz considered themselves to be artists, but the rest of the world didn't share these views. Stieglitz was the first photographer for whom photography made it into art galleries in the 1900s. He founded the very first gallery himself and it was called “The Little Galleries of the Photo-Secession” in 1905 and then “291” from 1908. It was this gallery that exhibited photographic works alongside other artworks like sculptures and paintings and was the first place where photography received the same status as other forms of art. Stieglitz, of course, exhibited his own images too, which has shown that photographs can not only do what paintings can through their composition and quality, but they are a unique work of art in their own right.”

Sharp-eyed readers might notice that Stieglitz’s photos made it into the galleries in the 1900s, not the 1940s. The 1940s, in the end, was a pretty arbitrary answer that marks the founding of the Photography Department of the Museum of Modern Art, New York. That Google hit upon this little piece of information, buried near the end of an anonymous article published online, is perhaps the strangest thing about the whole affair. It might very well have answered, according to the Mayer-Pierson case, that photography became an art in 1862.

By January 1862, Louis Frederic Mayer and Pierre-Louis Pierson had been in partnership for a number of years and their “Mayer et Pierson” studio was Paris’ foremost producer of “society photographs”, portrait photos of the leading figures of the bourgeoisie and aristocracy. In 1862, they brought a legal case against two rival firms that had been bootlegging their images and reselling them, in the anarcho-capitalist spirit of the legendary laissez-faire. The crux of the case was whether the photographs were protected under the French revolutionary legal codes that protected works of art from plagiarism, and once the works had legally been declared art, that was apparently that. It should be remembered, then, that questions about what art is, and who it is made by, always have to account for the bourgeois hegemony that still dominates questions of art. That is, they are often just questions about property rights and the people who are allowed to profit by their sale.

The algorithm Google uses to generate the answers to questions one gives it is not publicly understood in full (it’s a corporate secret) but it is driven by big data, the capability to generate systematic analysis of large amounts of data, and deep learning, a multi-layered training system for generating neural networks. Large data sets and the processing models developed to handle those sets, when combined with the neural architectures of deep learning, produce powerful systems for analysing text, such that questions posed to a search engine can be answered coherently. When I first used Google as a small child, I learned that asking specific questions was less likely to give me useful information than just searching for keywords. Less computer-literate users likely never made this discovery, so Google expanded its search function. A simple language modelling neural network looks at the important articles, prepositions, and conjunctions of the query, fills in a set of constants in its rudimentary understanding of the question’s structure with keywords, and formats the results of its search according to pages which purport to answer questions.
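To make the shape of that process concrete, here is a deliberately crude sketch in Python. It is not Google's method, which is not public; it is a toy under my own assumptions. The stop list `FUNCTION_WORDS`, the three-line corpus `SNIPPETS` (which only paraphrases facts mentioned in this essay), and the functions `keywords` and `answer_when` are all hypothetical names invented for illustration.

```python
import re

# Hypothetical stop list: function words the toy parser treats as
# question structure rather than as content.
FUNCTION_WORDS = {"when", "did", "an", "a", "the", "is", "was", "of", "to", "in"}

# Hypothetical mini-corpus standing in for "pages which purport to answer
# questions". The wording is invented; only the dates come from the essay.
SNIPPETS = [
    "Stieglitz brought photography into art galleries in the 1900s.",
    "The Mayer-Pierson case of 1862 declared photographs to be works of art.",
    "In the 1940s the Museum of Modern Art founded its photography "
    "department, helping photography become an art.",
]


def tokens(text):
    """Lower-case a string and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def keywords(question):
    """Keep only the content words of the question."""
    return {word for word in tokens(question) if word not in FUNCTION_WORDS}


def answer_when(question):
    """Answer a 'when' question with the first date-like string found in
    the snippet that shares the most keywords with the question."""
    keys = keywords(question)
    best = max(SNIPPETS, key=lambda snippet: len(keys & set(tokens(snippet))))
    match = re.search(r"\b\d{4}s?\b", best)
    return match.group(0) if match else None


print(answer_when("when did photography become an art"))
print(answer_when("when did photographs become works of art"))
```

With this toy corpus the first question yields “1940s” and the second, barely reworded, yields “1862”. Nothing in the procedure weighs one date against another; the answer is simply whichever snippet happens to share the most words with the query.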

Coherent question answering doesn't necessarily mean answering correctly. It isn’t really true by any reasonable metric that photography became an art in the 1940s, but the power of these tools lies not in producing right answers all the time but in approximating an understanding of a question. Google’s search engine, when a question is posed to it, can trawl its vast data centres for answers that have a meaningful correspondence to this question. When problems appear, they are usually due to poorly formulated questions, that is, questions which contain too much ambiguity. There is no “methodological inquiry” heuristic in these machine learning systems. But then again, many humans don’t have a very robust way of discerning generalities and parsing these ambiguities either.

Critiques of big data, and of artificial-intelligence-driven deployments of systems using that data, are somewhat in vogue right now. This is unambiguously a good thing. For instance, society as a whole depends on the relentless vigilance of anti-racist activists to draw attention to racist biases in deep learning, to give just the most visible example of this phenomenon. I would be perfectly happy to end this piece on the eerie implication that our aesthetics could be irrevocably stained with questions about property rights. If this were a critique of the deeply repulsive new fad of non-fungible tokens, I would do just that, but this is a piece about epistemic methodology and generality, and there is a more existential danger lurking behind the prevalence of big data and deep learning practices.

In the science fiction of Isaac Asimov, there exist the Three Laws of Robotics. These are self-evidently ridiculous (within the stories themselves they are designed to go wrong) but they are a useful device in illustrating a problem with AI alignment. The first law is “A robot may not injure a human being or, through inaction, allow a human being to come to harm.” How well formulated is this law? To what extent does it invite the sort of problems exemplified in the question of photography? There are various obvious problems with the notion of “harm” that are explored in Asimov’s stories, but we needn’t move into the literary richness of the verbs of the sentence in order to produce deeply problematic misunderstandings. The nouns suffice. What is a human? Is this a simple question? To the white property owners of the 18th century, “human” was not a class of being that included Africans. To the Nazis, it did not include Jews.

What ambiguities are left for us? There is the question of unborn people. Is a foetus a human? What about the billions of humans already dead? If a robot is expected to resuscitate someone whose heart has stopped, but who has residual brain activity, then what definition do we have that safeguards people in comas? What about the billions of humans yet to be born? They are what Nick Bostrom calls our cosmic endowment: a cohort of hypothetical people who, if we are to include them in our utilitarian calculus, produce a Pascal’s Wager type argument. How are we to weigh the lives, the wellbeing, the personal dignities, of a few billion marginalised people on the Imperial periphery of our present Earth against the prospects of a quintillion unborn colonial babies? What possible ethical reason does an arithmetic utilitarian calculus have for holding back on the wholesale destruction of cultures, ecosystems, and flesh and blood, if it can be demonstrated that funnelling resources into our interstellar machinations might bring about some glorious utopia?

The galactic propagation of our species, taken as an inherently good thing, does not preclude the possibility of an economic and political mode modelled on indentured labour, serfdom, and colonialism. It does not preclude a billion years of neo-feudalist rule by holders of capital, as they alone are made immortal by the science of a post-scarcity mode of production which propagates its measures of subjugation by the enforcement of artificial scarcity on the lower orders. The trunk of this future is already visible.